Artificial Intelligence has been advancing at an unprecedented rate, raising fears that AI will dominate many aspects of our lives in the near future and “take our jobs.” In this article, I use Moore's Law and other technological growth trends to make an educated estimate of the state of AI in the coming years.

Let’s explore these predictions and explain why AI may not completely take over within the next five years, and what the timeline might look like for AI to reach a truly advanced state.

The Current State of AI

AI technology has made significant strides in recent years. From natural language processing to computer vision, AI applications are becoming increasingly sophisticated, whether that’s the recent GPT-4o model, Google’s Gemini, or any one of the models in the race to become the most capable AI model.

Many people use these models in positive ways, unlocking new methods in research and education, but many others use them for harm, such as creating deepfakes. Despite the advances, considerable challenges and limitations remain: current AI systems need massive amounts of data, computational power, and energy to function effectively.

Moore's Law and Its Implications for AI

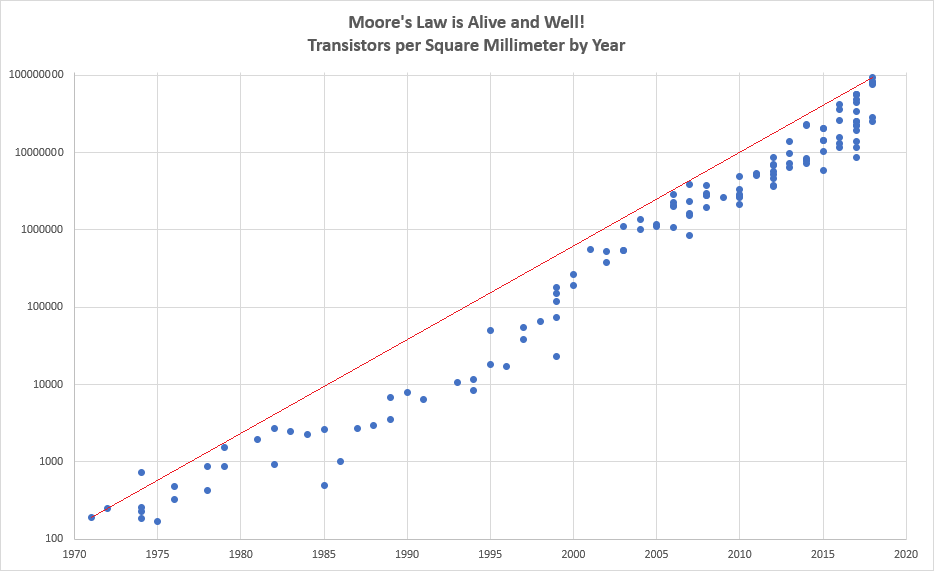

Moore's Law, the observation that the number of transistors on a microchip doubles approximately every two years, has been a reliable predictor of technological progress for decades. It is often read more loosely as computing becoming roughly twice as capable, and cheaper per unit of performance, every two years. If the trend holds, we can expect computational power to continue increasing exponentially.

However, there are physical and economic limits to this growth that may impact the pace of AI development.

Estimating AI's Growth Using Known Laws

By extrapolating from Moore's Law and considering other technological growth trends, we can make predictions about AI's capabilities in the coming years.

Computational Power

If we take a top-of-the-line GPU today, say the NVIDIA A100, it provides approximately 312 teraflops (TFLOPS) of performance. Moore's Law suggests a doubling of transistors every two years, which implies a potential increase in computational power of roughly 5.66-fold over five years (2^2.5 ≈ 5.66).

Future GPU Performance = 312 TFLOPS × 5.66 ≈ 1766 TFLOPS

However, the physical limitations of silicon and the economic costs of producing ever-smaller transistors could slow this growth. The increase might realistically be closer to 4 times due to these constraints:

Realistic Future GPU Performance = 312 TFLOPS × 4 ≈ 1248 TFLOPS
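
The arithmetic above can be reproduced with a short sketch. The function name `projected_tflops` and its parameters are illustrative, and the two-year doubling period is the Moore's Law assumption used in this article, not a measured GPU trend.

```python
def projected_tflops(current_tflops: float, years: float,
                     doubling_period_years: float = 2.0) -> float:
    """Project performance assuming one doubling every `doubling_period_years`."""
    growth_factor = 2 ** (years / doubling_period_years)
    return current_tflops * growth_factor


A100_TFLOPS = 312.0  # figure quoted in this article

# Idealized Moore's Law projection over five years (factor 2^2.5).
ideal = projected_tflops(A100_TFLOPS, years=5)

# The article's more cautious assumption: a flat 4x increase.
realistic = A100_TFLOPS * 4

print(f"Ideal 5-year projection: {ideal:.0f} TFLOPS")
print(f"Cautious 4x projection:  {realistic:.0f} TFLOPS")
```

Using the unrounded factor gives about 1,765 TFLOPS; the 1,766 figure comes from rounding the factor to 5.66 before multiplying.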

Data Availability and Quality

According to projections by IDC (International Data Corporation), the size of the global datasphere will increase from 64.2 zettabytes in 2020 to 175 zettabytes by 2025. This exponential growth in data is essential for training increasingly complex AI models. But many obstacles remain, including concerns about data privacy, data quality, and the effective labeling and processing of this data.

The availability of high-quality, labeled data remains critical even as data output grows. On current trends, preprocessing and labeling capacity will not scale at the same pace as the volume of data.
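
To put the IDC projection in annual terms, here is a minimal sketch of the implied compound growth rate. The helper name `implied_annual_growth` is hypothetical, and the calculation assumes smooth year-over-year compounding between the two quoted figures.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in this article: 64.2 ZB (2020) to 175 ZB (2025).
rate = implied_annual_growth(start=64.2, end=175.0, years=5)

print(f"Implied annual datasphere growth: {rate:.1%}")
```

Under these assumptions the datasphere grows by a little over 22% per year.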

Energy Consumption

Training AI models like GPT-3 requires substantial energy. GPT-3 used approximately 1,287 MWh to train. GPT-4, released approximately three years after its predecessor, is estimated to have required over 50 gigawatt-hours, roughly 40 times the energy it took to train GPT-3 (50,000 MWh / 1,287 MWh ≈ 39). Assuming AI models become more complex and require more data and computational power, energy consumption could grow significantly.

If we assume a comparable jump, rounded up to 50x (a 4,900% increase), the next model, which could be released within the next five years, would need around 2,500 gigawatt-hours to train. This would require large improvements in energy production and efficiency.

Given current energy efficiency improvements of around 10% per year (source: Lawrence Livermore National Laboratory), efficiency would compound to only about a 60% improvement over a five-year period (1.10^5 ≈ 1.61), far short of a 50x jump, unless energy production technologies improve significantly.
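
The energy figures in this section can be checked with a short sketch. All inputs are the estimates quoted in this article; the 50x multiplier is this article's assumption, and treating a 10% yearly efficiency gain as dividing the required energy by 1.10 each year is a simplifying assumption for illustration.

```python
GPT3_TRAINING_MWH = 1_287.0   # estimate quoted in this article
GPT4_TRAINING_MWH = 50_000.0  # 50 GWh, estimate quoted in this article

# How much more energy GPT-4 is estimated to have used than GPT-3.
observed_ratio = GPT4_TRAINING_MWH / GPT3_TRAINING_MWH

# The article's assumed jump for the next model.
assumed_multiplier = 50
next_model_gwh = (GPT4_TRAINING_MWH / 1_000) * assumed_multiplier

# Compounded efficiency gain: 10% per year for five years.
annual_efficiency_gain = 0.10
years = 5
cumulative_efficiency = (1 + annual_efficiency_gain) ** years

# Energy still needed if efficiency gains are applied to the full estimate.
effective_gwh = next_model_gwh / cumulative_efficiency

print(f"GPT-4 / GPT-3 energy ratio: {observed_ratio:.1f}x")
print(f"Next model at {assumed_multiplier}x: {next_model_gwh:.0f} GWh")
print(f"Cumulative efficiency gain: {cumulative_efficiency:.2f}x")
print(f"After efficiency gains: {effective_gwh:.0f} GWh")
```

Even with five years of compounded efficiency gains, the estimate stays above 1,500 GWh, roughly 30 times the GPT-4 figure.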

The Need for Increased Complexity in AI Models

Current AI models struggle with several major issues:

- Lack of Up-to-date Information: Current models are trained on static datasets and do not have real-time access to the internet for the most up-to-date information.

- Hallucinations: AI models sometimes generate information that is plausible-sounding but incorrect or nonsensical.

- Understanding Context and Nuance: Handling nuanced human language, context, and intent remains a challenge for AI.

To address these issues, future AI models will need to incorporate:

- Real-Time Data Integration: Access to and processing of real-time data from the internet to provide up-to-date responses.

- Improved Model Architectures: Enhancements in model architectures to reduce hallucinations and improve the reliability of generated content.

- Contextual Understanding: Advanced natural language processing techniques to better understand and maintain context over long conversations.

Increased Model Complexity

Solving these problems will significantly increase the complexity of AI models. For example:

- Real-Time Data Integration: Requires constant data updates and real-time processing capabilities.

- Improved Model Architectures: Likely to involve larger and more complex models with more parameters.

- Contextual Understanding: Demands more sophisticated training algorithms and potentially longer training times.

Let's assume each of these improvements independently doubles the complexity of the AI model. If we start with the current complexity (C), integrating these advancements might lead to:

Future Complexity = C × 2 (real-time data) × 2 (improved architectures) × 2 (contextual understanding) = C × 2^3 = C × 8

Since a clean doubling for each improvement is only a rough assumption, let’s use a 5x increase in complexity as a more conservative yet still substantial figure.
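
The key point in this estimate is that independent factors multiply rather than add. A minimal sketch, where the dictionary keys are illustrative labels and the factor of 2 for each improvement is the assumption stated above:

```python
from math import prod

# Assumed complexity multiplier for each improvement (this article's assumption).
doubling_factors = {
    "real_time_data": 2,
    "improved_architectures": 2,
    "contextual_understanding": 2,
}

# Independent multipliers compound: 2 * 2 * 2.
upper_estimate = prod(doubling_factors.values())

# The more conservative figure this article settles on.
conservative_estimate = 5

print(f"Upper estimate: {upper_estimate}x current complexity")
print(f"Conservative estimate: {conservative_estimate}x current complexity")
```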

Data Quality and Ethics

AI systems depend heavily on high-quality, unbiased data for training and decision-making. While the quantity of data is increasing rapidly, the quality and ethical considerations surrounding this data are equally crucial.

Data Generation and Processing

With the increase from 64.2 to 175 zettabytes, data generation will likely outpace the current capabilities of processing and labeling. Ensuring data quality and addressing biases require significant resources. Let's consider the human labor and computational resources needed for data labeling. Assuming 1 hour of human labor is needed to label 1 GB of data, labeling 175 zettabytes would require:

175 ZB × 10^12 GB/ZB × 1 hour/GB = 175 × 10^12 hours ≈ 1.75 × 10^14 hours

This is not feasible with current resources, indicating a substantial gap in data quality and ethical processing.
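
To give a sense of scale, the labeling estimate can be worked through in a short sketch. It uses decimal units (1 ZB = 10^21 bytes and 1 GB = 10^9 bytes, so 1 ZB = 10^12 GB) and the one-hour-per-gigabyte assumption above; the 2,000-hour working year is an extra assumption added here for illustration.

```python
GB_PER_ZB = 10 ** 12         # decimal units: 10^21 bytes / 10^9 bytes
HOURS_PER_WORK_YEAR = 2_000  # assumption: about 40 hours/week for 50 weeks

datasphere_zb = 175  # IDC projection quoted in this article
hours_per_gb = 1     # this article's labeling assumption

# Total human labeling time for the whole datasphere.
total_hours = datasphere_zb * GB_PER_ZB * hours_per_gb

# Equivalent number of full-time working years.
person_years = total_hours / HOURS_PER_WORK_YEAR

print(f"Total labeling time: {total_hours:.2e} hours")
print(f"Full-time person-years: {person_years:.2e}")
```

Under these assumptions the task amounts to tens of billions of person-years, which is why labeling the full datasphere by hand is out of reach.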

Conclusion: Will AI Be in a Truly Advanced State in the Next Five Years?

The idea that AI will "take over" in the next five years seems improbable, even though it will surely continue to advance and become more deeply integrated into many industries. There are several obstacles to overcome, including energy usage, data quality, and processing power limitations. AI will not be able to evolve that quickly without significant advancements in energy efficiency, data ethics, and technology.

It seems more reasonable to anticipate that AI will become truly advanced within the next ten to fifteen years, based on existing patterns and reasonable development estimates. This prolonged period enables the development and successful implementation of essential ethical, technological, and resource-based breakthroughs.

Sources and References:

- Moore, G. E. (1965). Cramming more components onto integrated circuits. Electronics, 38(8), 114-117.

- Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning. MIT Press.

- Markoff, J. (2015). Machines of Loving Grace: The Quest for Common Ground Between Humans and Robots. HarperCollins.

- IDC. (2020). Global DataSphere Forecast 2021-2025.

- Lawrence Livermore National Laboratory. (2021). Energy Efficiency Trends in Computing.