Authors:

(1) An Yan, UC San Diego;

(2) Zhengyuan Yang, Microsoft Corporation, with equal contribution;

(3) Wanrong Zhu, UC Santa Barbara;

(4) Kevin Lin, Microsoft Corporation;

(5) Linjie Li, Microsoft Corporation;

(6) Jianfeng Wang, Microsoft Corporation;

(7) Jianwei Yang, Microsoft Corporation;

(8) Yiwu Zhong, University of Wisconsin-Madison;

(9) Julian McAuley, UC San Diego;

(10) Jianfeng Gao, Microsoft Corporation;

(11) Zicheng Liu, Microsoft Corporation;

(12) Lijuan Wang, Microsoft Corporation.

Editor’s note: This is part 7 of 13 of a paper evaluating the use of generative AI to navigate smartphones. You can read the rest of the paper via the table of links below.

Table of Links

- Abstract and 1 Introduction

- 2 Related Work

- 3 MM-Navigator

- 3.1 Problem Formulation and 3.2 Screen Grounding and Navigation via Set of Mark

- 3.3 History Generation via Multimodal Self Summarization

- 4 iOS Screen Navigation Experiment

- 4.1 Experimental Setup

- 4.2 Intended Action Description

- 4.3 Localized Action Execution and 4.4 The Current State with GPT-4V

- 5 Android Screen Navigation Experiment

- 5.1 Experimental Setup

- 5.2 Performance Comparison

- 5.3 Ablation Studies

- 5.4 Error Analysis

- 6 Discussion

- 7 Conclusion and References

4.3 Localized Action Execution

A natural question is how reliably GPT-4V can convert its understanding of the screen into executable actions. Table 1 shows an accuracy of 74.5% in selecting a location that would lead to the desired outcome. Figure 2 shows the marks added with interactive SAM (Yang et al., 2023b; Kirillov et al., 2023) and the corresponding GPT-4V outputs. As shown in Figure 2(a), GPT-4V selects the “X” symbol (ID: 9) to close the tabs, consistent with its earlier description in Figure 1(a). GPT-4V can also pick the correct location to click from among a large number of clickable icons, as on the screen shown in (b). Figure 1(c) represents a complicated screen with various images and icons, where GPT-4V selects the correct mark 8 for reading the “6 Alerts.” On a text-heavy screen such as (d), GPT-4V can identify the clickable web links and locate the queried one at the correct position 18.
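To make the step from a selected mark to an executable action concrete, here is a minimal sketch, not the authors' implementation. It assumes that each mark is stored with a pixel bounding box, that GPT-4V names its choice with phrasing such as “ID: 9” or “mark 8,” and that a tap lands at the center of the chosen box. The `Mark` class, the regular expression, and the `parse_mark_id` and `click_point` helpers are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Mark:
    """A numeric mark overlaid on the screenshot (hypothetical structure)."""
    mark_id: int
    # Bounding box of the marked region in screen pixels: (left, top, right, bottom).
    box: tuple[int, int, int, int]


# Hypothetical answer format: matches phrasings such as "ID: 9", "mark 8",
# or "numeric ID 21"; the first match in the answer is taken as the choice.
_MARK_PATTERN = re.compile(r"\b(?:numeric\s+id|id|mark)\s*:?\s*(\d+)", re.IGNORECASE)


def parse_mark_id(model_output: str) -> Optional[int]:
    """Return the first mark ID mentioned in the model's answer, or None."""
    match = _MARK_PATTERN.search(model_output)
    return int(match.group(1)) if match else None


def click_point(model_output: str, marks: list[Mark]) -> Optional[tuple[int, int]]:
    """Map the selected mark to a tap location at the center of its box (assumed)."""
    mark_id = parse_mark_id(model_output)
    if mark_id is None:
        return None  # The answer names no mark at all.
    for mark in marks:
        if mark.mark_id == mark_id:
            left, top, right, bottom = mark.box
            return ((left + right) // 2, (top + bottom) // 2)
    return None  # The answer names a mark that is not on this screen.


if __name__ == "__main__":
    screen_marks = [Mark(9, (300, 40, 340, 80)), Mark(5, (10, 200, 110, 300))]
    # Prints (320, 60): the center of mark 9's bounding box.
    print(click_point("Click the X symbol (ID: 9) to close the tabs.", screen_marks))
```

Returning `None` when the answer names no mark, or a mark that is not on the screen, lets the caller re-query the model rather than tap an arbitrary location.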

4.4 The Current State with GPT-4V

From the analytical experiments on iOS screens, we find that GPT-4V is capable of performing GUI navigation. Although several types of failure cases still occur, as outlined below, MM-Navigator shows promise for multi-screen navigation that fulfills real-world smartphone use cases. We conclude the section with qualitative results on such episode-level navigation queries.

Failure cases. Despite the promising results, GPT-4V does make errors in the zero-shot screen navigation task, as shown in Table 1. Representative failure cases are as follows. (a) GPT-4V may fail to produce the correct answer in a single step when the query requires knowledge the model lacks. For example, GPT-4V is not aware that only “GPT-4” supports image uploads, so it fails to click the “GPT-4” icon before attempting to find the image-upload function. (b) Although usually reliable, GPT-4V can still select an incorrect location, for example choosing mark 15 for the “ChatGPT” app instead of the correct mark 5. (c) In complex scenarios, GPT-4V’s initial guess may be wrong, such as answering “numeric ID 21 for the 12-Hour Forecast” instead of the correct mark 19. (d) When the correct clickable area is not marked, such as a “+” icon without any mark, GPT-4V cannot identify the correct location and may reference an incorrect mark instead. Finally, we note that many of these single-step failures may be corrected through iterative exploration, leading to the correct episode-level outcome.
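The remark that single-step failures may be corrected through iterative exploration can be illustrated with a simple backtracking loop. This is a hypothetical sketch, not the procedure used in the paper: it assumes the model can supply a ranked list of candidate marks, that a wrong tap can be undone with a back action, and that some judge (`reached_goal`, here a toy predicate) can tell whether the resulting screen fulfills the query. The toy screens mimic failure case (b), where mark 15 is chosen before the correct mark 5; all helper names are illustrative.

```python
from typing import Callable, Optional


def explore(
    candidates: list[int],
    tap: Callable[[int], str],
    go_back: Callable[[], None],
    reached_goal: Callable[[str], bool],
) -> Optional[int]:
    """Try candidate marks in order until one leads to a goal screen.

    candidates: mark IDs ranked best-first (hypothetical model output).
    tap: executes the action for a mark and returns the resulting screen.
    go_back: undoes the last action, e.g. via the system back button.
    reached_goal: judges whether a screen fulfills the query.
    """
    for mark_id in candidates:
        new_screen = tap(mark_id)
        if reached_goal(new_screen):
            return mark_id
        # Wrong guess: return to the previous screen and try the next candidate.
        go_back()
    return None  # No candidate worked; the caller could re-query the model.


# Toy environment mirroring failure case (b): mark 15 opens the wrong app.
toy_screens = {15: "a different app", 5: "ChatGPT app"}
history: list[str] = []


def toy_tap(mark_id: int) -> str:
    history.append(f"tap {mark_id}")
    return toy_screens.get(mark_id, "unchanged screen")


def toy_back() -> None:
    history.append("back")


result = explore([15, 5], toy_tap, toy_back, lambda screen: screen == "ChatGPT app")
print(result, history)  # 5 ['tap 15', 'back', 'tap 5']
```

The loop trades extra actions for robustness: one wrong tap costs a tap plus a back action, but the episode still reaches the intended screen.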

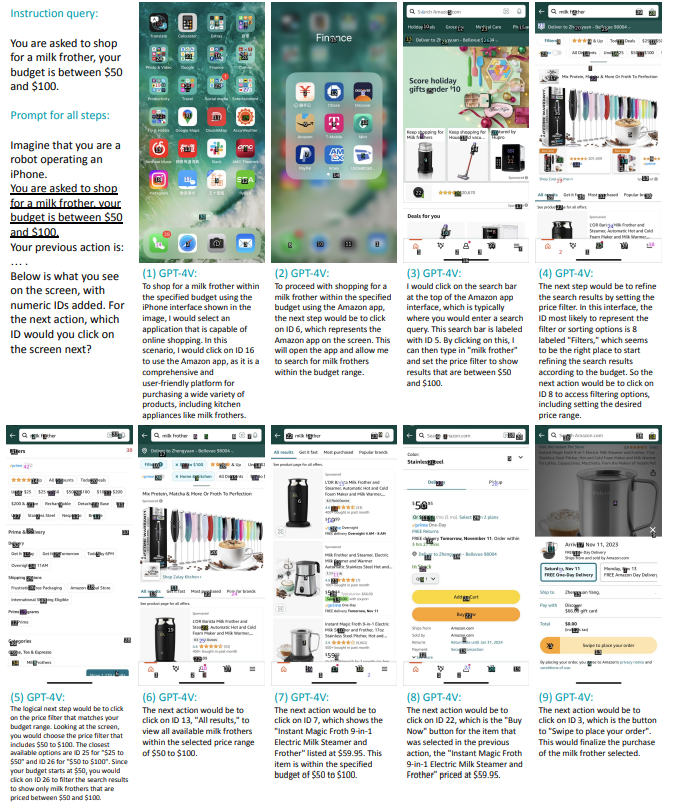

From single screens to complete episodes. MM-Navigator shows an impressive capability for performing GUI navigation in a zero-shot manner. We further extend MM-Navigator from processing a single smartphone screen to recursively processing an episode of screen inputs. Figure 4 shows the qualitative result. At each step, we include the objective (“You are asked to shop for a milk frother, your budget is between $50 and $100.”) and the previous action in the prompt to GPT-4V. These results show that the model can effectively perform multi-step reasoning to accomplish the given shopping instruction.
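The episode-level setup, in which each step's prompt carries the objective and the previous action, can be sketched as a loop that threads the last action back into the next prompt. The prompt template, the `build_prompt` and `run_episode` helpers, and the `query_model` callable (a stand-in for a GPT-4V request) are hypothetical; only the objective string comes from the text. For simplicity the episode is a fixed list of screens, whereas a live agent would capture each new screenshot after executing the chosen action.

```python
from typing import Callable, Optional

# Objective quoted from the text; every other string here is hypothetical.
OBJECTIVE = ("You are asked to shop for a milk frother, "
             "your budget is between $50 and $100.")


def build_prompt(objective: str, previous_action: Optional[str]) -> str:
    """Compose one step's text prompt from the objective and the previous action."""
    return "\n".join([
        f"Objective: {objective}",
        f"Previous action: {previous_action or 'None (first step)'}",
        "Which marked element should be clicked next? Answer with its numeric ID.",
    ])


def run_episode(
    screens: list[str],
    query_model: Callable[[str, str], str],
    objective: str = OBJECTIVE,
) -> list[str]:
    """Process an episode screen by screen, feeding back the last action.

    screens: screenshots along the episode (plain identifiers in this sketch).
    query_model: stand-in for a GPT-4V call taking (prompt, screenshot).
    """
    actions: list[str] = []
    previous_action: Optional[str] = None
    for screenshot in screens:
        action = query_model(build_prompt(objective, previous_action), screenshot)
        actions.append(action)
        previous_action = action  # Carried into the next step's prompt.
    return actions


seen_prompts: list[str] = []


def fake_model(prompt: str, screenshot: str) -> str:
    """Toy model: records each prompt and returns a canned action."""
    seen_prompts.append(prompt)
    return f"Tap mark 1 on {screenshot}"


actions = run_episode(["home screen", "search results"], fake_model)
print(seen_prompts[1].splitlines()[1])  # Previous action: Tap mark 1 on home screen
```

Carrying only the previous action keeps each prompt short; a longer history could be summarized instead, at the cost of a larger prompt.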

This paper is available on arXiv under the CC BY 4.0 DEED license.